Reverse Engineering Protobuf Definitions From Compiled Binaries

Mar 3rd, 2024 | 14 minute read

A few years ago I released protodump, a CLI for extracting full source protobuf definitions from compiled binaries (regardless of the target architecture). This can come in handy if you’re trying to reverse engineer an API used by a closed source binary, for instance. In this post I’ll explain how it works, but first, a demo:

How does it work?

To understand how it works, let’s take a look at a small test.proto example:

syntax = "proto3";

option go_package = "./;helloworld";

message HelloWorld {
  string name = 1;
}

If we compile this with protoc to Go, we’ll get some Go code that defines the object type, creates a getter for the name field, and so on. We can use it as follows:

func main() {
	obj := helloworld.HelloWorld{
		Name: "myname",
	}
	fmt.Printf("%s\n", obj.GetName())
}

$ go run main.go
myname

However, protobuf also supports runtime reflection. Rather than calling a getter that is fixed at compile time, we can fetch the list of fields and query them at runtime:

func main() {
	obj := helloworld.HelloWorld{
		Name: "myname",
	}
	fields := obj.ProtoReflect().Descriptor().Fields()
	for i := 0; i < fields.Len(); i++ {
		field := fields.Get(i)
		value := obj.ProtoReflect().Get(field).String()
		fmt.Printf("Field %d has value '%v'\n", i, value)
	}
}

$ go run main.go
Field 0 has value 'myname'

How can the generated Go code know the field names and types at runtime like this? The protoc compiler stores a whole copy of the protobuf definition in the generated output code. Here is the complete protoc output for our HelloWorld message type, and in particular, lines 72-78 store this protobuf definition:

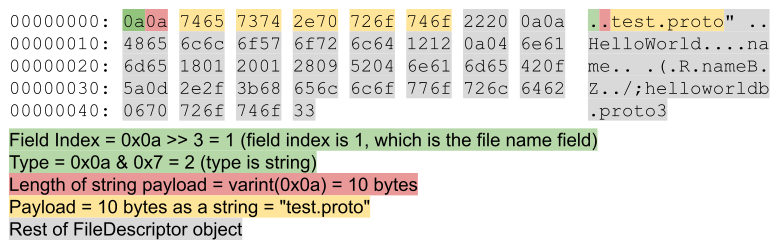

var file_test_proto_rawDesc = []byte{
	0x0a, 0x0a, 0x74, 0x65, 0x73, 0x74, 0x2e, 0x70, 0x72, 0x6f, 0x74, 0x6f, 0x22, 0x20, 0x0a, 0x0a,
	0x48, 0x65, 0x6c, 0x6c, 0x6f, 0x57, 0x6f, 0x72, 0x6c, 0x64, 0x12, 0x12, 0x0a, 0x04, 0x6e, 0x61,
	0x6d, 0x65, 0x18, 0x01, 0x20, 0x01, 0x28, 0x09, 0x52, 0x04, 0x6e, 0x61, 0x6d, 0x65, 0x42, 0x0f,
	0x5a, 0x0d, 0x2e, 0x2f, 0x3b, 0x68, 0x65, 0x6c, 0x6c, 0x6f, 0x77, 0x6f, 0x72, 0x6c, 0x64, 0x62,
	0x06, 0x70, 0x72, 0x6f, 0x74, 0x6f, 0x33,
}

This byte array stores the field names and types, messages, services, enums, options, and so on. It’s a little meta because this data is itself a protobuf message (FileDescriptorProto in protobuf’s own descriptor.proto, which I’ll simply call a FileDescriptor here), encoded into a byte array using the protobuf wire format.

With this knowledge in hand, the strategy for extracting protobuf definitions from binaries becomes the following:

- Iterate over the contents of a program binary

- Find sequences of bytes that look like they might be FileDescriptors, such as the example above

- Extract these bytes and decode them into “.proto” source definitions

Finding bytes that look like FileDescriptors

To find FileDescriptors I take the naive approach of simply searching the program binary for the ASCII string “.proto”. The FileDescriptor object has a field for the file name of the proto file it was compiled from, so as long as engineers name their files with a “.proto” extension, that string will be present in the binary.
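As a rough illustration of that naive scan, here is a minimal Go sketch that collects every offset at which “.proto” appears in a byte slice. The function name protoOffsets and the stand-in data are hypothetical and are not taken from protodump; a real run would load the binary from disk instead.

```go
package main

import (
	"bytes"
	"fmt"
)

// protoOffsets returns the offset of every occurrence of ".proto" in data.
// (Hypothetical helper name, used only for this illustration.)
func protoOffsets(data []byte) []int {
	needle := []byte(".proto")
	var hits []int
	for from := 0; ; {
		i := bytes.Index(data[from:], needle)
		if i < 0 {
			return hits
		}
		hits = append(hits, from+i)
		from += i + 1 // resume the search just past the start of this hit
	}
}

func main() {
	// Stand-in for a program binary; in practice this would be the
	// contents of the file being examined (for example via os.ReadFile).
	data := []byte{0x7f, 'E', 'L', 'F', 0x0a, 0x09}
	data = append(data, "foo.proto"...)
	data = append(data, 0x00, 0x0a, 0x09)
	data = append(data, "bar.proto"...)

	fmt.Println(protoOffsets(data))
}
```

Each offset is only a candidate: the string may also show up in unrelated data, which is why the later steps validate the surrounding bytes.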

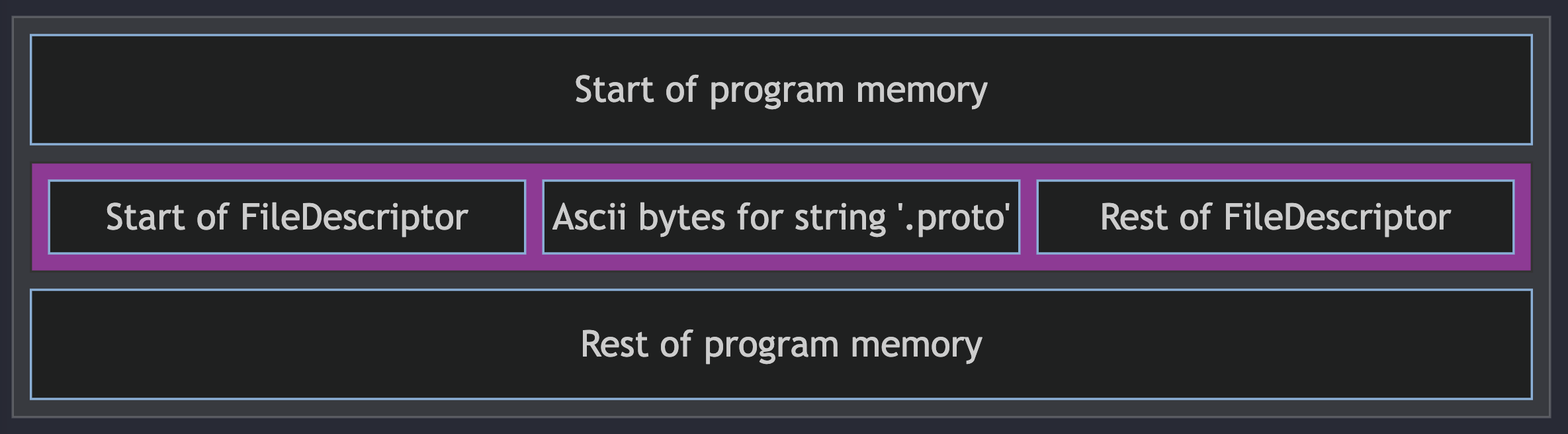

We can imagine a program binary as a sequence of bytes laid out as follows:

So when we find a “.proto” string, to capture the entire FileDescriptor (the entire purple segment) we need to first move backward to the start of the object and then read until the end.

To determine how far back to read, it’s helpful to understand the protobuf wire format. Protobuf makes heavy use of variable-length integers (“varints”), which encode unsigned 64-bit integers using between 1 and 10 bytes (least significant group first, i.e. little-endian), with smaller integers using fewer bytes. Each byte carries 7 bits of the value; if the most significant bit of a byte is set, the following byte is also part of the varint:

# Value is 8:
  00001000
# ^ MSB is not set, end of varint

# Value is 150:
  10010110 00000001
# ^ MSB is set, varint continues to next byte
#          ^ MSB is not set, end of varint

# How to calculate 150:
# 10010110 00000001        // Original inputs
#  0010110  0000001        // Drop continuation bits
#  0000001  0010110        // Convert to big-endian
#    00000010010110        // Concatenate
#  128 + 16 + 4 + 2 = 150  // Interpret as an unsigned 64-bit integer
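The same calculation can be written as a few lines of Go. This is a minimal sketch, not protodump’s code: decodeVarint is a hypothetical name, and it does not check for overflow in the tenth byte. The standard library’s binary.Uvarint does the same job and is shown alongside for comparison.

```go
package main

import (
	"encoding/binary"
	"fmt"
)

// decodeVarint reads one varint from the front of buf. It returns the decoded
// value and the number of bytes consumed, or n == 0 if buf ends mid-varint or
// the varint would run past 10 bytes.
func decodeVarint(buf []byte) (value uint64, n int) {
	for i, b := range buf {
		if i == 10 {
			return 0, 0 // a 64-bit value never needs more than 10 bytes
		}
		// The low 7 bits are payload; earlier bytes are less significant.
		value |= uint64(b&0x7f) << (7 * uint(i))
		if b&0x80 == 0 { // MSB not set: this was the last byte
			return value, i + 1
		}
	}
	return 0, 0 // ran out of input with the continuation bit still set
}

func main() {
	v, n := decodeVarint([]byte{0x96, 0x01})
	fmt.Println(v, n) // 150 2

	// The standard library decoder agrees on the value.
	std, _ := binary.Uvarint([]byte{0x96, 0x01})
	fmt.Println(std) // 150
}
```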

Protobuf messages are encoded using a “Tag-Length-Value” scheme, where each field of a message is encoded as the following structure:

- A varint for the field number and wire type of the field (the “tag”)

  - This is defined as the field number of the field within its message, bit-shifted left by 3 and OR-ed with the wire type. Protobuf defines 6 wire types, with strings (and other length-delimited payloads, such as nested messages) having value 2

- A varint for the byte-length of the payload (for length-delimited fields)

- The payload itself

This gets repeated for every field in the message. Using the byte array from the HelloWorld example above, we have the following structure:
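To make that structure concrete, the sketch below walks the top-level fields of the HelloWorld byte array using only the Go standard library. The names field and walkFields are hypothetical and chosen for this illustration; the sketch does not descend into nested messages and treats the deprecated group wire types (3 and 4) as errors.

```go
package main

import (
	"encoding/binary"
	"errors"
	"fmt"
)

// helloWorldDesc is the byte array from the HelloWorld example above.
var helloWorldDesc = []byte{
	0x0a, 0x0a, 0x74, 0x65, 0x73, 0x74, 0x2e, 0x70, 0x72, 0x6f, 0x74, 0x6f, 0x22, 0x20, 0x0a, 0x0a,
	0x48, 0x65, 0x6c, 0x6c, 0x6f, 0x57, 0x6f, 0x72, 0x6c, 0x64, 0x12, 0x12, 0x0a, 0x04, 0x6e, 0x61,
	0x6d, 0x65, 0x18, 0x01, 0x20, 0x01, 0x28, 0x09, 0x52, 0x04, 0x6e, 0x61, 0x6d, 0x65, 0x42, 0x0f,
	0x5a, 0x0d, 0x2e, 0x2f, 0x3b, 0x68, 0x65, 0x6c, 0x6c, 0x6f, 0x77, 0x6f, 0x72, 0x6c, 0x64, 0x62,
	0x06, 0x70, 0x72, 0x6f, 0x74, 0x6f, 0x33,
}

// field is one decoded top-level field (hypothetical type for this sketch).
type field struct {
	Number   uint64 // tag >> 3
	WireType uint64 // tag & 7
	Payload  []byte // raw payload bytes, without tag or length prefix
}

// walkFields splits buf into its top-level fields without interpreting them.
func walkFields(buf []byte) ([]field, error) {
	var out []field
	for len(buf) > 0 {
		tag, n := binary.Uvarint(buf)
		if n <= 0 {
			return nil, errors.New("bad tag varint")
		}
		buf = buf[n:]
		f := field{Number: tag >> 3, WireType: tag & 7}
		var size uint64
		switch f.WireType {
		case 0: // varint: the payload is the varint itself
			_, m := binary.Uvarint(buf)
			if m <= 0 {
				return nil, errors.New("bad varint value")
			}
			size = uint64(m)
		case 1: // fixed 64-bit
			size = 8
		case 2: // length-delimited: a varint length, then that many bytes
			l, m := binary.Uvarint(buf)
			if m <= 0 {
				return nil, errors.New("bad length varint")
			}
			buf = buf[m:]
			size = l
		case 5: // fixed 32-bit
			size = 4
		default:
			return nil, errors.New("unsupported wire type")
		}
		if size > uint64(len(buf)) {
			return nil, errors.New("truncated payload")
		}
		f.Payload = buf[:size]
		buf = buf[size:]
		out = append(out, f)
	}
	return out, nil
}

func main() {
	fields, err := walkFields(helloWorldDesc)
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	for _, f := range fields {
		fmt.Printf("field %d, wire type %d, %d-byte payload\n", f.Number, f.WireType, len(f.Payload))
	}
}
```

For this input the walk finds four length-delimited fields, numbered 1, 4, 8 and 12. In descriptor.proto those correspond to the file name (“test.proto”), the message types, the file options (which hold go_package) and the syntax (“proto3”).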

So the search strategy is:

- Loop over program memory looking for the ASCII string “.proto”. When we find one:

  - Assume that this is the file name at the start of an encoded file descriptor object. Move back to the previous 0x0a byte (the tag for the file name field)

  - If the file name is exactly 10 bytes long, move back 1 byte further (otherwise the 0x0a byte we found is actually the string length and not the tag)

  - Now that we’re at the beginning of the FileDescriptor object, keep consuming bytes so long as they are a valid protobuf wire encoding

  - Take all the bytes we’ve consumed and attempt to unmarshal them into a FileDescriptor object

  - If successful, convert the FileDescriptor object to a source “.proto” file and output it
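The backtracking and consuming steps can be sketched in Go as follows. This is an illustration under simplifying assumptions, not protodump’s actual implementation: the helper names descriptorStart, validPrefixLen and extractCandidate are hypothetical, file names are assumed to be shorter than 128 bytes (so the length fits in one byte), and group wire types are treated as the end of valid data.

```go
package main

import (
	"bytes"
	"encoding/binary"
	"fmt"
)

// helloWorldDesc is the byte array from the HelloWorld example above.
var helloWorldDesc = []byte{
	0x0a, 0x0a, 0x74, 0x65, 0x73, 0x74, 0x2e, 0x70, 0x72, 0x6f, 0x74, 0x6f, 0x22, 0x20, 0x0a, 0x0a,
	0x48, 0x65, 0x6c, 0x6c, 0x6f, 0x57, 0x6f, 0x72, 0x6c, 0x64, 0x12, 0x12, 0x0a, 0x04, 0x6e, 0x61,
	0x6d, 0x65, 0x18, 0x01, 0x20, 0x01, 0x28, 0x09, 0x52, 0x04, 0x6e, 0x61, 0x6d, 0x65, 0x42, 0x0f,
	0x5a, 0x0d, 0x2e, 0x2f, 0x3b, 0x68, 0x65, 0x6c, 0x6c, 0x6f, 0x77, 0x6f, 0x72, 0x6c, 0x64, 0x62,
	0x06, 0x70, 0x72, 0x6f, 0x74, 0x6f, 0x33,
}

// descriptorStart walks back from a ".proto" hit to the 0x0a tag byte of the
// file name field. It returns -1 if no 0x0a byte precedes the hit.
func descriptorStart(data []byte, hit int) int {
	nameEnd := hit + len(".proto")
	start := bytes.LastIndexByte(data[:hit], 0x0a)
	if start < 0 {
		return -1
	}
	// A 10-byte file name has the length byte 0x0a, so the byte we found may
	// be the length rather than the tag; in that case step back once more.
	if nameEnd-(start+1) == 10 && start > 0 && data[start-1] == 0x0a {
		start--
	}
	return start
}

// validPrefixLen returns how many leading bytes of buf parse as whole fields.
func validPrefixLen(buf []byte) int {
	pos := 0
	for pos < len(buf) {
		tag, n := binary.Uvarint(buf[pos:])
		if n <= 0 || tag>>3 == 0 { // field number 0 is never valid
			return pos
		}
		next := pos + n
		switch tag & 7 {
		case 0: // varint
			_, m := binary.Uvarint(buf[next:])
			if m <= 0 {
				return pos
			}
			next += m
		case 1: // fixed 64-bit
			next += 8
		case 2: // length-delimited
			l, m := binary.Uvarint(buf[next:])
			if m <= 0 || l > uint64(len(buf)-next-m) {
				return pos
			}
			next += m + int(l)
		case 5: // fixed 32-bit
			next += 4
		default: // groups and unknown wire types end the scan in this sketch
			return pos
		}
		if next > len(buf) {
			return pos
		}
		pos = next
	}
	return pos
}

// extractCandidate returns the bytes around hit that look like a descriptor.
func extractCandidate(data []byte, hit int) []byte {
	start := descriptorStart(data, hit)
	if start < 0 {
		return nil
	}
	return data[start : start+validPrefixLen(data[start:])]
}

func main() {
	// Stand-in binary: some leading bytes, the descriptor, then zero padding.
	blob := append([]byte{0x7f, 'E', 'L', 'F', 0x00, 0x01}, helloWorldDesc...)
	blob = append(blob, 0x00, 0x00, 0x00)

	hit := bytes.Index(blob, []byte(".proto"))
	cand := extractCandidate(blob, hit)
	fmt.Println(len(cand), bytes.Equal(cand, helloWorldDesc))
}
```

The sketch stops at producing candidate bytes. The remaining steps would hand those bytes to a protobuf library, for example proto.Unmarshal into a descriptorpb.FileDescriptorProto from google.golang.org/protobuf, and discard candidates that fail to unmarshal.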

I couldn’t find any existing code in the protoc compiler that converts a FileDescriptor object back into a source “.proto” file, so I wrote my own implementation.

Finally, for unit testing, I wrote a small harness that takes proto files as input, executes the protoc compiler on them, takes that FileDescriptor output and reserializes it as proto, and checks that the input proto and output proto are byte-for-byte identical.

Shortcomings

There are a number of limitations to this approach. First and foremost, everything written above is specific to Google’s protoc compiler; it does not apply to the more general protobuf specification. If someone uses a non-protoc compiler, it may have a completely different mechanism for implementing reflection.

Even when using protoc:

- People can name their files with an extension other than “.proto”

- They can obfuscate the file descriptor in program memory

- Protobuf explicitly does not guarantee field ordering on the wire format, so moving the file name field to a different location other than the start of the FileDescriptor would break the scanning

Additionally, many protobuf compilers offer the option to suppress this embedding completely (at the cost of losing runtime reflection capabilities).

Despite all these shortcomings, I’ve found that 99% of the binaries I examine use protoc and don’t have any obfuscation, and all their protobuf definitions are extracted in full.

P.S. If you enjoy this kind of content feel free to follow me on Twitter: @arkadiyt