Quantifying Untrusted Symantec Certificates

Feb 4th, 2018 | 17 minute read

I was reading Hacker News the other day when I came upon a tweet that made me curious to quantify exactly how many, and which, sites will have their trust removed. This blog post answers these questions by writing a scanner to detect bad Symantec certificates (using the same logic Google Chrome uses) and running it against the Alexa Top 1 Million sites. But first, some context.

Why, and when, is Google distrusting Symantec TLS certificates

Symantec is a certificate authority, capable of issuing certificates for any website. As with all CAs, they have an incredible amount of power over the trust ecosystem of the internet, and must follow a strict set of operational requirements (called the Baseline Requirements). These requirements are set forth by the CA/Browser Forum, a consortium of certificate authorities and browser vendors, in order to hold CAs responsible and accountable for the trust we place in them.

However, Symantec has had a long history of TLS/PKI incidents. Two of the more notable incidents are:

- In September 2015, they misissued ~2,600 valid certificates that were never requested, including certificates for google.com and www.google.com. As a result of this incident, Google required all Symantec certificates to be published into the Certificate Transparency logs. Symantec also fired the employees who issued the Google certificates, due to backlash and perhaps pressure from Google.

- In January 2017, it came to light that Symantec had misissued at least 30,000 certificates over a period of several years. There’s a long public thread on the mozilla.dev.security.policy group with all the details and fallout.

As a result of these incidents, in September 2017 Google announced a timeline for completely distrusting Symantec certificates, which meant certain death for Symantec’s PKI business. Symantec was understandably displeased and published their own open letter in response, objecting to Google’s actions. However, in the end Symantec relented and decided to sell off its PKI business entirely to DigiCert, rather than rebuild it from scratch in order to regain browser trust.

Starting with the release of Chrome 66 on April 17th 2018, Symantec certificates issued before June 1st 2016 or on/after December 1st 2017 will no longer be trusted. Certificates issued in the 18-month window between those dates remain trusted for now, giving website operators time to transition to new certificates, and Chrome 70 (scheduled for release around October 23rd 2018) will fully distrust all Symantec certificates.
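Put another way, for a certificate that chains to one of the legacy Symantec roots (and isn't exempted), its not_before date alone decides which release stops trusting it. A minimal sketch of that rule, with a hypothetical helper name and the cutoff dates taken from Chrome's source:

```ruby
# Cutoffs from Chrome's IsUntrustedSymantecCert, in UTC.
FIRST_ACCEPTED_CERT_DATE  = Time.utc(2016, 6, 1)   # Unix time 1464739200
SYMANTEC_DEPRECATION_DATE = Time.utc(2017, 12, 1)  # Unix time 1512086400

# Hypothetical helper: assumes the certificate already chains to a legacy
# Symantec root and is not exempted. Returns the Chrome milestone that
# stops trusting it.
def distrusting_milestone(not_before)
  outside_window = not_before < FIRST_ACCEPTED_CERT_DATE ||
                   not_before >= SYMANTEC_DEPRECATION_DATE
  outside_window ? 66 : 70
end

distrusting_milestone(Time.utc(2015, 3, 10))  # => 66
distrusting_milestone(Time.utc(2017, 1, 15))  # => 70
distrusting_milestone(Time.utc(2017, 12, 1))  # => 66
```

Note the asymmetric bounds: a certificate issued exactly at midnight on 2016-06-01 is still inside the window, while one issued exactly at midnight on 2017-12-01 is not.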

That brings us to today. As we saw with the opening tweet, if you’re running the Canary build of Chrome you can already see the effects of this change: quite a few websites have their certificates rejected and show a “Your connection is not private” interstitial. Now let’s quantify exactly how many.

Scanning for Symantec certificates

To scan for bad Symantec certificates, we first need to figure out how Chrome detects them. For that we go straight to the source: most of Chrome’s TLS validation code lives inside src/net/cert/*, in particular inside cert_verify_proc.cc. It contains code to check for weak keys, weak signature hash algorithms, name constraints validation, OCSP validation, and all the other checks you expect to happen before getting the “green lock” in your address bar.

This file also contains the logic to check for Symantec certificates. In plain English, it does the following:

- Call IsLegacySymantecCert to check whether any of the public key hashes in the certificate chain match a blacklist of 58 Symantec public key hashes (defined in symantec_certs.cc), and that none of them are on a whitelist of 11 allowed public key hashes

- If a blacklisted public key hash is found in the certificate chain, call IsUntrustedSymantecCert to check whether the leaf certificate for the website being viewed has a not_before start time that is either before 2016-06-01 or on/after 2017-12-01

- If these conditions are met, mark the connection as untrusted
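Stripped of Chrome's types, the two checks above are plain set and date comparisons. The sketch below restates them in Ruby as pure functions so they can be exercised without a network connection. The function names are hypothetical, the single whitelist stands in for Chrome's separate exception and managed sub-CA lists, and the hashes in the usage lines are placeholders rather than real Symantec key hashes.

```ruby
# Cutoffs from Chrome's IsUntrustedSymantecCert, in UTC.
UNTRUSTED_BEFORE = Time.at(1464739200).utc  # 2016-06-01 00:00:00 UTC
UNTRUSTED_FROM   = Time.at(1512086400).utc  # 2017-12-01 00:00:00 UTC

# The IsLegacySymantecCert check as described above: some key in the chain
# is blacklisted and no key in the chain is whitelisted.
def legacy_symantec_chain?(chain_hashes, blacklist, whitelist)
  (chain_hashes & blacklist).any? && (chain_hashes & whitelist).empty?
end

# The IsUntrustedSymantecCert check: the leaf's not_before falls outside
# the accepted window [2016-06-01, 2017-12-01).
def untrusted_symantec_date?(not_before)
  not_before < UNTRUSTED_BEFORE || not_before >= UNTRUSTED_FROM
end

# Both conditions must hold for Chrome 66 to reject the connection.
def distrusted_in_chrome66?(chain_hashes, leaf_not_before, blacklist, whitelist)
  legacy_symantec_chain?(chain_hashes, blacklist, whitelist) &&
    untrusted_symantec_date?(leaf_not_before)
end

blacklist = ["aa11", "bb22"]  # placeholder hashes, not real ones
whitelist = ["cc33"]
distrusted_in_chrome66?(["ff00", "aa11"], Time.utc(2015, 1, 1), blacklist, whitelist)          # => true
distrusted_in_chrome66?(["ff00", "aa11", "cc33"], Time.utc(2015, 1, 1), blacklist, whitelist)  # => false
distrusted_in_chrome66?(["ff00", "aa11"], Time.utc(2017, 1, 1), blacklist, whitelist)          # => false
```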

For the curious, here’s the relevant code, though it’s not necessary to understand it to follow this post:

```cpp
int CertVerifyProc::Verify(/* ... */) {
  // ... code snipped ...

  // Distrust Symantec-issued certificates, as described at
  // https://security.googleblog.com/2017/09/chromes-plan-to-distrust-symantec.html
  if (!(flags & CertVerifier::VERIFY_DISABLE_SYMANTEC_ENFORCEMENT) &&
      IsLegacySymantecCert(verify_result->public_key_hashes)) {
    if (IsUntrustedSymantecCert(*verify_result->verified_cert)) {
      verify_result->cert_status |= CERT_STATUS_AUTHORITY_INVALID;
      // ... code snipped ...
    }
  }
}

bool IsUntrustedSymantecCert(const X509Certificate& cert) {
  // ... code snipped ...

  // Certificates issued on/after 2017-12-01 00:00:00 UTC are no longer
  // trusted.
  const base::Time kSymantecDeprecationDate =
      base::Time::UnixEpoch() + base::TimeDelta::FromSeconds(1512086400);
  if (start >= kSymantecDeprecationDate)
    return true;

  // Certificates issued prior to 2016-06-01 00:00:00 UTC are no longer
  // trusted.
  const base::Time kFirstAcceptedCertDate =
      base::Time::UnixEpoch() + base::TimeDelta::FromSeconds(1464739200);
  if (start < kFirstAcceptedCertDate)
    return true;

  return false;
}
```

We can implement these same checks in a Ruby scanner to quickly check if a host is going to be affected by the upcoming changes. We need to:

- open a connection to a host and extract the certificate chain

- compare the public key hashes to the blacklist and whitelist mentioned above

- compare the leaf certificate’s not_before date to the 2016-06-01 and 2017-12-01 cutoffs
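The hash comparison only works if we hash exactly what Chrome hashes: the SHA-256 of each certificate's DER-encoded public key (its SubjectPublicKeyInfo), not of the whole certificate. Because it's keyed on the key rather than the certificate, a reissued certificate that reuses the same key produces the same hash. A minimal sketch; public_key_hash and self_signed are hypothetical helper names, and the throwaway certificates exist only for illustration:

```ruby
require "digest"
require "openssl"

# Hex-encoded SHA-256 of the certificate's DER-encoded public key
# (the SubjectPublicKeyInfo), the form compared against the lists.
def public_key_hash(cert)
  Digest::SHA256.hexdigest(cert.public_key.to_der)
end

# Hypothetical helper: a throwaway self-signed certificate for `key`.
def self_signed(key, common_name)
  cert = OpenSSL::X509::Certificate.new
  cert.version = 2 # X.509 v3
  cert.serial = rand(1..2**31)
  cert.subject = cert.issuer = OpenSSL::X509::Name.parse("/CN=#{common_name}")
  cert.public_key = key # only the public half is embedded
  cert.not_before = Time.now
  cert.not_after = Time.now + 3600
  cert.sign(key, OpenSSL::Digest.new("SHA256"))
  cert
end

key = OpenSSL::PKey::RSA.new(2048)
first  = self_signed(key, "a.example")
second = self_signed(key, "b.example")
public_key_hash(first) == public_key_hash(second)  # => true (same key)
```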

When you make an HTTPS connection using Ruby’s standard library (Net::HTTP), the certificate chain is available through the #peer_cert_chain method on the underlying OpenSSL::SSL::SSLSocket. The connection’s socket is private, but it’s simple to extract it anyway:

```ruby
require "net/http"
require "openssl"

# Open a TLS connection to the host and return the certificate chain the
# server presented.
def get_chain(host)
  uri = URI("https://#{host}")
  Net::HTTP.start(uri.host, uri.port, use_ssl: true) do |http|
    # @socket is a Net::BufferedIO; its #io is the OpenSSL::SSL::SSLSocket.
    return http.instance_variable_get(:@socket).io.peer_cert_chain
  end
end
```

This returns an array of OpenSSL::X509::Certificate objects in the order the server sent them, leaf first. When establishing a connection it’s common for the server to omit the root certificate from the chain, returning only the leaf and intermediate(s); the full version of this function adds some handling around this to make sure we get a complete chain.
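That missing-root handling isn't reproduced in this post. One way to do it, not necessarily the one the scanner uses, is to let OpenSSL build the chain itself: OpenSSL::X509::StoreContext verifies the leaf against a trust store and, on success, exposes the complete chain from leaf to root. complete_chain and system_store are hypothetical names, and the sketch assumes the local default trust store contains the Symantec roots in question.

```ruby
require "openssl"

# Hypothetical helper: let OpenSSL build a complete chain (leaf first,
# root last) from the certificates the server sent plus the roots in
# `store`. Falls back to the server-provided chain if verification fails.
def complete_chain(chain, store)
  leaf, *intermediates = chain
  ctx = OpenSSL::X509::StoreContext.new(store, leaf, intermediates)
  ctx.verify ? ctx.chain : chain
end

# Trust store backed by the system's default root certificates.
def system_store
  store = OpenSSL::X509::Store.new
  store.set_default_paths
  store
end
```

With the get_chain function above, this would be called as complete_chain(get_chain("example.com"), system_store). Returning the server's own chain when verification fails keeps data for hosts that can't be verified locally, at the cost of possibly missing an omitted root for those hosts.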

Then to check if a host is using a bad certificate, we calculate the certificate public key hashes, compare them with the blacklist and whitelist, and also check the not_before date against the cutoffs:

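```ruby
require "digest"
require "openssl"

# SYMANTEC_BLACKLIST, SYMANTEC_MANAGED and SYMANTEC_EXCEPTIONS are arrays of
# hex-encoded SHA-256 public key hashes from Chrome's symantec_certs.cc;
# they are assumed to be defined elsewhere in the scanner.
def check_host(host)
  # Normalize each chain entry to an OpenSSL::X509::Certificate.
  certs = get_chain(host).map { |cert| OpenSSL::X509::Certificate.new(cert) }
  public_key_hashes = certs.map do |cert|
    Digest::SHA256.hexdigest(cert.public_key.to_der)
  end
  not_before = certs.first.not_before # the leaf certificate comes first

  if (SYMANTEC_BLACKLIST & public_key_hashes).any? &&
     (SYMANTEC_MANAGED & public_key_hashes).empty? &&
     (SYMANTEC_EXCEPTIONS & public_key_hashes).empty? &&
     (not_before < Time.at(1464739200).utc ||  # 2016-06-01 00:00:00 UTC
      not_before >= Time.at(1512086400).utc)   # 2017-12-01 00:00:00 UTC
    puts "#{host} uses bad Symantec certificate"
  end
end
```

Putting it all together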

Now we can download the Alexa Top 1 Million sites and run the scanner against every host. Since the Alexa Top 1M list trims subdomains, we also check the www. version of every host on the list (if it resolves via DNS). Checking more known subdomains from the Certificate Transparency logs would give even more accurate results but make the scan take longer, so we use www. as a proxy. On my personal laptop with 16 worker processes, the entire scan took 11 hours to complete.
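The host-list expansion can be sketched as follows. This assumes the list is the usual Alexa CSV with one rank,domain pair per line; expand_hosts and resolvable? are hypothetical helpers, and the resolver is injectable so the logic can be tested without DNS.

```ruby
require "resolv"

# True if the name resolves to at least one address (DNS or hosts file).
def resolvable?(name)
  Resolv.getaddress(name)
  true
rescue Resolv::ResolvError, Resolv::ResolvTimeout
  false
end

# Hypothetical helper: turn "rank,domain" lines into a host list, adding
# the www. variant of each domain when it resolves.
def expand_hosts(lines, resolves: method(:resolvable?))
  lines.flat_map do |line|
    domain = line.strip.split(",", 2).last
    next [] if domain.nil? || domain.empty?

    hosts = [domain]
    www = "www.#{domain}"
    hosts << www if !domain.start_with?("www.") && resolves.call(www)
    hosts
  end
end
```

Usage would look like expand_hosts(File.foreach("top-1m.csv")), where the filename is an assumption. Resolving a million names one at a time is slow, so in practice this step benefits from the same worker-process parallelism as the scan itself.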

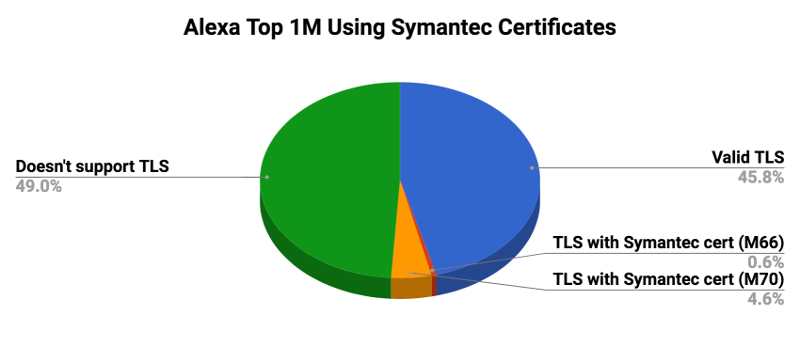

The original 1 million hosts were expanded to ~1,980,000 hosts by adding www. domains. Of the ~1,010,000 that supported TLS, ~11,500 will be distrusted with Chrome 66 in April and a further ~91,600 with Chrome 70 in October:

| Category | Count |
|---|---|
| Doesn’t support TLS | 968,602 |
| Valid TLS (unaffected) | 905,901 |
| TLS with Symantec cert, distrusted in Chrome 66 (M66) | 11,510 |
| TLS with Symantec cert, distrusted in Chrome 70 (M70) | 91,627 |
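The four categories are mutually exclusive, so the totals quoted above can be checked directly against the table. The percentages below are derived arithmetic on these counts, not separate measurements:

```ruby
# Counts from the table above.
counts = {
  no_tls:    968_602,
  valid_tls: 905_901,
  chrome_66:  11_510,
  chrome_70:  91_627,
}

total_hosts = counts.values.sum                       # => 1977640
tls_hosts   = total_hosts - counts[:no_tls]           # => 1009038
affected    = counts[:chrome_66] + counts[:chrome_70] # => 103137

# Share of TLS-enabled hosts, in percent, rounded to two decimals.
pct = ->(n) { (100.0 * n / tls_hosts).round(2) }
pct.(counts[:chrome_66])  # => 1.14
pct.(counts[:chrome_70])  # => 9.08
pct.(affected)            # => 10.22
```

So roughly one in ten TLS-enabled hosts in the expanded list will be distrusted by October.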

Overall, the issue is not hugely widespread, but some notable hosts are still using Symantec certificates that will become distrusted in April:

- icloud.com

- pagerduty.com

- wechat.com

- blackberry.com

- citirewards.com

- tesla.com

- coffeemeetsbagel.com

and others. You can download the full lists of hosts that will be distrusted in April (Chrome 66) and in October (Chrome 70). All the code and supporting files for this scanner are available in this GitHub repo.

P.S. If you enjoy this kind of content feel free to follow me on Twitter: @arkadiyt

Changelog

02/06/2018: A previous version of this post undercounted the total number of affected hosts due to:

- not handling missing root certificates correctly

- not checking subdomains, which are trimmed from the Alexa Top 1M

Thanks to Ashley Pinner and Ryan Sleevi for their feedback.